In many organizations, metrics such as time to market, productivity, delivery velocity, and cost are commonly used to measure business performance. Individuals, teams, departments, and whole organizations are all expected to improve these values. Setting target metrics, and aiming to meet or exceed them, is a central tenet of Continuous Improvement.

At PopUp Mainframe, we share that vision. To improve product efficiency and user experience, we strive to modernize mainframe systems as much as possible by embracing contemporary tools and technologies. For example, we use Ansible to enable automation, the PopUp Mainframe FastTrack facility to make the mainframe more agile with snapshot/switch capabilities, and Galasa CLI to support automated testing.

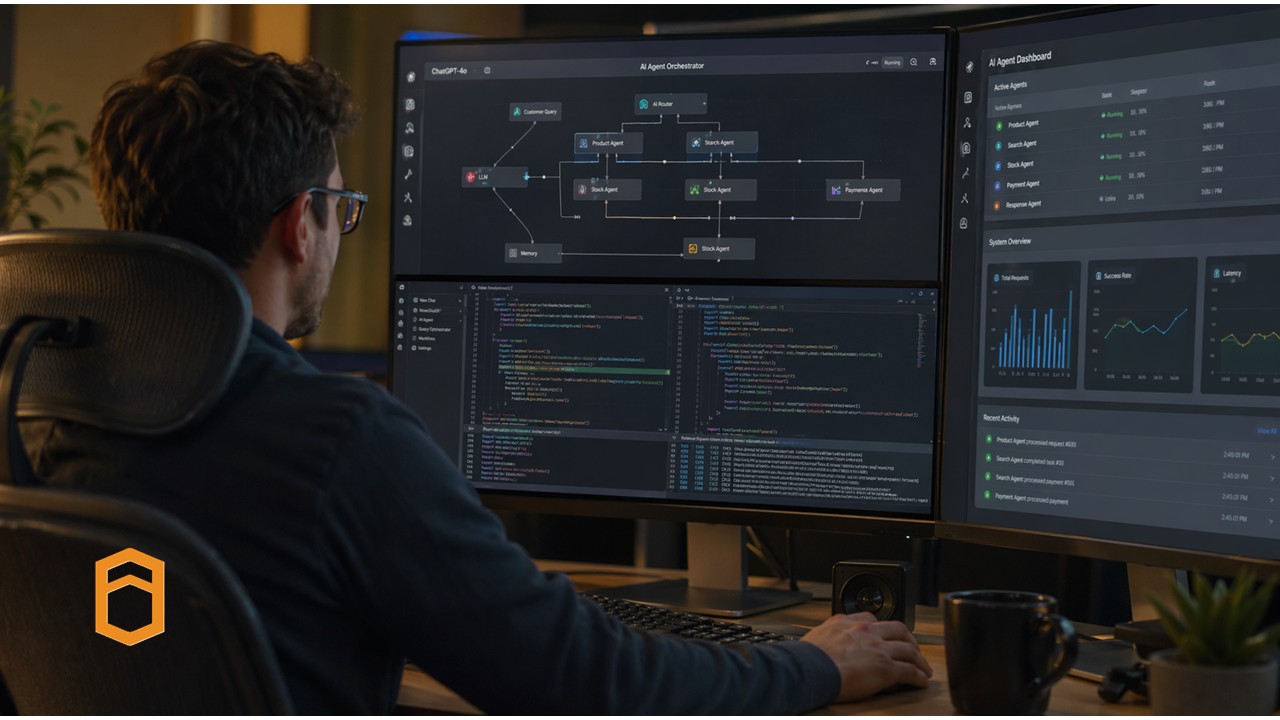

Along similar lines, we have recently begun to harness Artificial Intelligence (AI) at PopUp Mainframe to tap into some of its incredible potential. We are working with a range of AI models as well as vendors specializing in AI for the mainframe.

First Impressions

One of the first opportunities we saw was to simplify the operational experience. With AI, we believe the days of memorizing the syntax of every z/OS command are firmly behind us. With the power of Large Language Models, plain conversational language is all it takes to execute day-to-day z/OS operations. Simply describe what you want to accomplish, and let AI handle the how.

For seasoned professionals, this becomes a force multiplier. Someone who deeply understands z/OS operations can stay focussed on outcomes rather than on remembering and executing multiple commands, parameters, dataset names and so on.

What makes this even more powerful is the seamless experience that AI with PopUp Mainframe can deliver. There is no need to log in to the mainframe green screen, nor to a Linux VM host, just to carry out routine operations. Everything you need is accessible through a single, unified conversational interface, eliminating the friction of juggling multiple systems and sessions.

But perhaps the most transformative benefit is the simplification of hitherto specialist tasks. Tasks that once demanded deep domain knowledge from Subject Matter Experts are now accessible to a far broader audience – junior operators, cross-functional teams, and newcomers to the platform can perform complex z/OS activities with the help of AI. This fundamentally shifts the skill threshold.

The ripple effect on organizations is significant: less dependency on a small pool of specialists, faster execution, lower training overhead, and meaningful savings in both time and cost. AI doesn’t replace expertise – it amplifies it and shares it.

AI-Powered PopUp Activities

Inspired by this potential, we have recently started using AI in the PopUp lab, and it is already helping us in many different areas. Here are a few examples:

- The PopUp Mainframe build process. As a software vendor, we regularly build new versions of our product. For every new version, we perform rigorous testing and validation of the various sub-systems in PopUp (Db2, CICS, MQ, IMS, FTP), as well as software versions, open-source tool connectivity, spool volume storage, Started Task execution, SMS and more. Before using AI, we automated many of the validation steps (mostly with Ansible playbooks), but a lot of activities remained manual because the cost of automating them outweighed the benefit. After exploring ways to accelerate our testing and validation process, we successfully integrated PopUp Mainframe with Anthropic’s Claude Code, enabling us to interact with both the Linux and z/OS layers entirely through natural language. Using a simple, structured set of instructions such as “Submit XYZ job”, “Check if XYZ STC is running”, or “Generate a summary report”, we feed our prompt set directly to Claude and receive clear, user-friendly validation reports in return. This eliminated the need for both manual validation and traditional automation scripting, saving significant time and effort.

- UI Development. Building the new UI for PopUp Mainframe’s FastTrack facility showcased how transformative AI can be in modern software development. Using AI-powered tools like V0.dev, Lovable.dev, ChatGPT, Claude, and GitHub Copilot, what once took days of sketching mock-ups and writing boilerplate code was reduced to minutes. Describing a vision in natural language was sufficient to generate fully functional, production-ready layouts and components. These tools didn’t just accelerate delivery; they sparked a cultural shift within our team, inspiring curiosity and a new approach to building software. For more information, please see here.

- Documentation. We have also used AI extensively for documentation, and have found that it can extract useful information from our z/OS systems and Z code and turn it into documentation.
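To illustrate the "simple, structured set of instructions" mentioned in the build-process example above, here is a minimal sketch of how such a prompt set might be assembled. It is an illustration only, not our actual tooling: the names `ValidationStep` and `build_prompt`, the subsystem labels, and the guard sentence are hypothetical, and the sketch only builds the prompt text; it does not call any AI tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ValidationStep:
    """One plain-language validation instruction (hypothetical structure)."""
    subsystem: str    # free-text label, e.g. "JES" or "STC" (illustrative)
    instruction: str  # the instruction as a person would phrase it


def build_prompt(steps: List[ValidationStep], report_title: str) -> str:
    """Render the steps as a numbered, unambiguous prompt."""
    lines = [
        "Carry out the following validation steps in order.",
        # Assumed guard sentence; adjust to your own rules of engagement.
        "Do not delete or modify anything; report failures and continue.",
        "",
    ]
    for number, step in enumerate(steps, start=1):
        lines.append(f"{number}. [{step.subsystem}] {step.instruction}")
    lines.append("")
    lines.append(f"Finally, generate a summary report titled '{report_title}'.")
    return "\n".join(lines)


# "XYZ" is the placeholder name used in the text above.
steps = [
    ValidationStep("JES", "Submit XYZ job"),
    ValidationStep("STC", "Check if XYZ STC is running"),
]
prompt = build_prompt(steps, "Build validation")
print(prompt)
```

Keeping the steps as data rather than free text makes the prompt set easy to review and to reuse across product versions.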

Managing Risk

As AI becomes more deeply embedded in our workflows, safeguarding intellectual property and maintaining operational integrity will be vital.

To that end, we are already building out our AI rules of engagement:

- We are very careful to ensure our source code is never exposed in plain text when working with AI tools. By keeping code in binary format rather than readable text, we significantly reduce the risk of sensitive IP being inadvertently processed, stored, or learned by AI models. This is a simple but critical safeguard that every team should consider.

- We do not handle sensitive customer data in our lab environment; however, we recognise this may not be the case for everyone. It is a reminder to always assess the nature of the data in an environment before engaging AI, and to ensure that any AI tooling used in production or customer-facing contexts complies with relevant data protection regulations such as GDPR.

- We have established clear AI usage guidelines that apply across all scenarios — whether a team member is using AI independently on their laptop or connecting it directly to a PopUp Mainframe instance. These guidelines are not a one-time exercise; they are reinforced through regular training as AI capabilities and risks evolve. Building a culture of awareness is just as important as having policies on paper.

- We take strict precautions to ensure that no user credentials with administrative access are ever included in prompts. Elevated privileges in the wrong context could expose systems to unintended actions, and AI is no exception to this risk. The principle of least privilege applies equally (especially!) when AI is in the loop.

- Before any prompt is executed, it goes through a review and validation step. We ensure prompts are specific, well-scoped, and unambiguous — avoiding broad or generic instructions that could lead to unpredictable outcomes. Vague prompts leave room for misinterpretation, and in a mainframe environment, the consequences of an unintended action can be significant. Inefficient prompts also utilise more AI credits, and the costs can quickly soar if not kept in check.

- We consciously limit the use of AI for any tasks involving deletion — whether it is removing TSO IDs, deleting datasets, or cleaning up files. Irreversible actions carry inherent risk, and AI introduces an additional layer of unpredictability that we are not willing to accept without robust human oversight. Where deletion tasks are necessary, human review and approval remain mandatory.

- Not every task is a good candidate for AI. Before delegating any activity to AI, we assess the criticality of the task, evaluate the potential risks, and weigh the cost-benefit carefully. This structured approach ensures that we are using AI where it genuinely adds value — and stepping back where human judgement and control are more appropriate.
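Some of the rules above (no credentials in prompts, well-scoped wording, human approval for deletions) lend themselves to an automated pre-flight check that runs before a person reviews a prompt. The following is a minimal sketch under stated assumptions, not a description of our own tooling: the function `review_prompt`, the word lists, the credential pattern, and the minimum-length threshold are all hypothetical and deliberately simplistic.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical lists and thresholds; illustrative only, not a complete policy.
DESTRUCTIVE_WORDS = {"delete", "remove", "purge", "scratch", "erase"}
VAGUE_PHRASES = ("everything", "all datasets", "whatever", "as needed")
CREDENTIAL_PATTERN = re.compile(
    r"\b(password|passwd|pwd|token|secret)\s*[:=]\s*\S+", re.IGNORECASE
)
MIN_WORDS = 3  # assumed lower bound for a well-scoped instruction


@dataclass
class Review:
    passed_checks: bool         # True when no automated check raised a concern
    needs_human_approval: bool  # True for deletion-type requests
    reasons: List[str] = field(default_factory=list)


def review_prompt(prompt: str) -> Review:
    """Run simple pre-flight checks on a prompt before it reaches an AI tool."""
    reasons: List[str] = []
    lowered = prompt.lower()
    words = re.findall(r"[a-z0-9]+", lowered)

    # Anything that looks like an embedded credential fails the check.
    if CREDENTIAL_PATTERN.search(prompt):
        reasons.append("possible credential in prompt")

    # Very short or open-ended prompts are treated as poorly scoped.
    if len(words) < MIN_WORDS:
        reasons.append("prompt too short to be well-scoped")
    for phrase in VAGUE_PHRASES:
        if phrase in lowered:
            reasons.append(f"vague wording: '{phrase}'")

    # Deletion-type requests always go to a person, even if otherwise clean.
    needs_human = any(word in DESTRUCTIVE_WORDS for word in words)

    return Review(
        passed_checks=not reasons,
        needs_human_approval=needs_human,
        reasons=reasons,
    )


for text in ("Check if XYZ STC is running", "Delete dataset ABC.TEST.DATA"):
    print(text, "->", review_prompt(text))
```

Keyword matching of this kind is only a first filter. It will miss rephrased requests and flag harmless ones, so it supports human review rather than replacing it.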

Poorly structured prompts, unintended data exposure, over-reliance on AI output without validation, and the misuse of elevated access are all real threats that organisations must actively manage. Establishing clear policies, educating the team, and industrializing technical safeguards are a few of the measures that allow us to harness AI’s potential with confidence.

A bright future awAIts

As an organization, our AI journey is only just getting started. We have already identified several high-impact areas where we believe AI can make a meaningful difference to our customers. Here are some areas under consideration for the future:

- PII Field Identification – Detecting personally identifiable information across datasets and databases to help customers meet compliance and data privacy obligations faster and with greater accuracy.

- Data Architecture Diagrams from Db2 Tables – Using AI to analyse Db2 table structures and generate visual architecture diagrams (a sort of reverse engineering), giving teams instant clarity on complex data landscapes without hours of manual documentation.

- Application Subsetting for Test Data Preparation – Creating representative subsets of applications and data to support faster, more efficient test cycles, reducing dependency on full production copies and accelerating delivery pipelines.

- Data Migration Support – Leveraging AI to assist in planning, mapping, and validating data migration activities, reducing risk and effort in one of the most complex and high-stakes mainframe tasks.

Final Thoughts – Putting AI into CI

At PopUp Mainframe, continuous improvement is not just a principle; it is how we operate every day. We are always asking how we can do things better than we did yesterday, and AI adoption represents one of the most significant leaps forward we have taken on that journey.

As a team, we have begun sharpening our prompt engineering skills, learning how to craft instructions that are precise, reliable, and optimised for the outcomes we need. This discipline is still evolving, and we recognise there is more to explore, refine, and master. Prompt engineering is fast becoming a core competency, much like coding or system design, and we are investing in it accordingly. The possibilities that AI unlocks for mainframe environments are vast — and we are committed to pushing those boundaries thoughtfully and responsibly. Check back soon for more AI innovation news from the PopUp Mainframe team.