Last year, the PopUp Mainframe team was delighted to attend, present, and exhibit at the GSE UK Conference 2024 – the region’s biggest and best mainframe community event. Rubbing shoulders with industry luminaries, technical experts, and household-name organizations, we had a fantastic experience. And this year, with a fresh new title of GS UK 2025, the conference looks set to be one of the best ever – we look forward to attending another fantastic event!

Here’s a quick recap of why the PopUp Mainframe team value the conference so much.

The need for speed – a mainframe market requirement

Mainframes remain the mainstay of enterprise computing. Industry reports (including our own from earlier this year) indicate continued reliance upon IBM mainframes across a variety of sectors, at some of the world’s largest and most successful organizations. Often part of a hybrid IT strategy nowadays, the IBM mainframe remains a central component of the organizational infrastructure, and the applications it houses are business-critical.

As important as it is, issues blight the mainframe environment, and none more so than the problem with bottlenecks (also reported in our survey). Mainframe delivery teams often suffer from the shortage of non-production environments for development, test, research, and training, impacting their ability to deliver as fast as the business would like.

Even with the advent of DevOps-style tooling on the mainframe, these resource availability restrictions make genuine acceleration of delivery very tough.

Flexible mainframe delivery to match your imagination

We spoke last year of PopUp Mainframe’s breakthrough approach to providing readily available, virtual mainframe environments to anyone who needs access, whenever they need it. PopUp Mainframe can figuratively pop up in minutes to provide ready access for situations that demand it: scaling up test environment availability to run resource-intensive or multi-user testing; enabling dev teams to collaborate on complex merges; enabling system administrators to validate fixes across different versions of the same sub-system; offering ready access to new trainees before they ‘go live’ with their own LPAR access; allowing an urgent application fix to commence while the usual LPAR is down for maintenance. The list goes on.

We were grateful for being invited to speak and for the lively discussion from the audience.

Increased PopUp Capability On Show

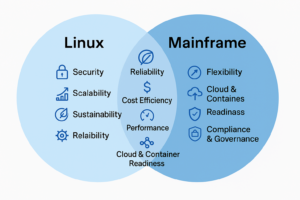

Since last year, we’ve released an updated product, widened the scope of our deployment offering to take in the IFL and LinuxONE, and we’ve added some key new capabilities to the product line.

And we’re pleased to return to the conference (now renamed GS UK) to widen the scope of our discussions based on these recent innovations. Join us to learn more about our latest work on Linux, with Ansible playbooks, with our new “FastTrack” facility, with mainframe open-source technology, and more.

Our busy conference speaking agenda looks like this.

Monday 3rd November

11am – More Horses! How Linux on Z speeds up mainframe change

2.30pm – Open-Source Mainframe DevOps demonstration

3.45pm – Automate the heck out of every LPAR (using Ansible playbooks)

Tuesday 4th November

3.30pm – Mainframe IT Skills Overview – part of the WAVEZ 101 Track

4.45pm – Platform Engineering – a real-world use case

Wednesday 5th November

Midday – Market Survey Findings RoundTable (Executive Track – Invitation Only)

The full conference agenda is here.

Team Time

This is a real community show, and the attendee list reads like a who’s who of the UK mainframe world. From the major mainframe vendors such as IBM, Broadcom and BMC, to the crucial resellers, consultancies and service providers like TES Enterprise Solutions and Vertali, to the press presence of Planet Mainframe, to the notable end user organizations present, to the fantastic volunteers at the Open Mainframe Project, there’s an insightful, informative conversation to be had every hour of the day.

Join Us

The mainframe community has redoubled its efforts in the last few years to engage more proactively and open its doors to the curious, and the Whittlebury Park conference is a real fixture of the mainframe community calendar.

Please make time to join us at one of our sessions or come and chat to us on the Expo floor (we’ll be hanging out with our partners, TES Enterprise Solutions, at their booth).

We hope to see you there. If you haven’t yet registered – go here.

Further Reading

For more information on how PopUp Mainframe can help revolutionize mainframe delivery in your organization, take a look at the website, including this list of recent press articles.